Google Cloud Associate Cloud Engineer (ACE) Study Guide

Note: These are my personal study notes that I am using to prepare myself for the Google Cloud Associate Cloud Engineer (ACE) certification exam.

Read the Book

The notes in this repository are compiled into a highly readable, searchable online book using mdBook.

Read the live study guide here

e-book

If you preffer the offline mode you can download epub file.

Project Structure

The study material is organized into specific Google Cloud services and concepts. All source material is written in Markdown and located in the src/ directory. Key areas covered include:

- Compute Services: Compute Engine, GKE, Cloud Run, App Engine, Cloud Functions.

- Storage & Databases: Cloud Storage, Cloud SQL, Cloud Spanner, BigQuery, Firestore, Bigtable, Memorystore.

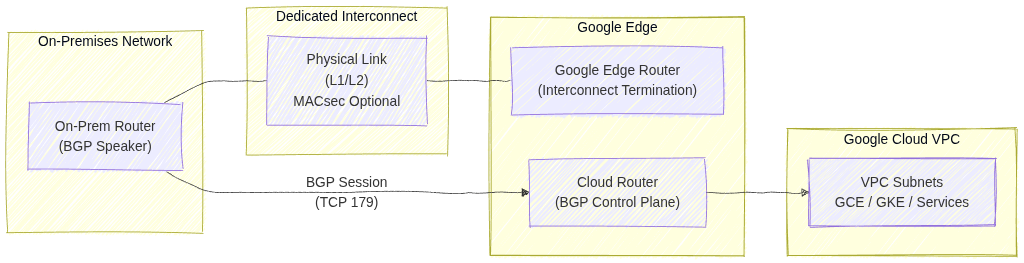

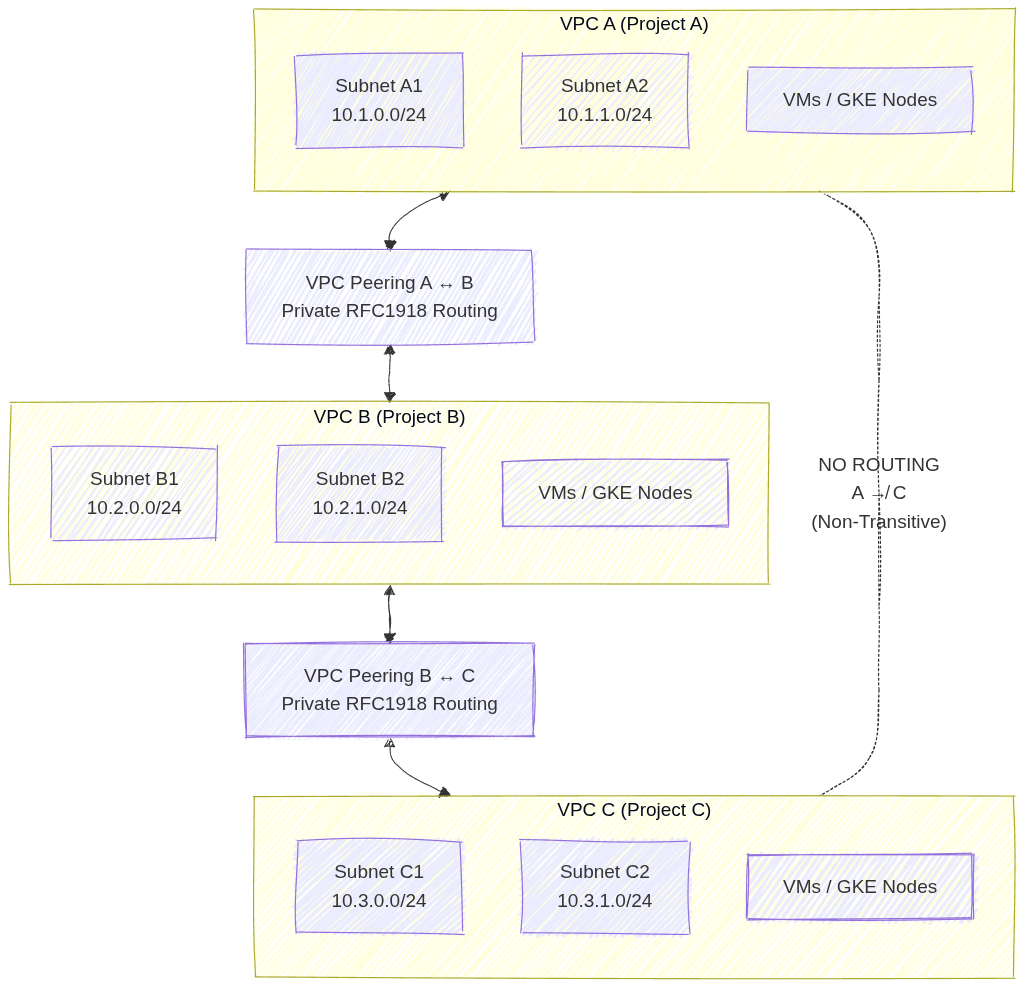

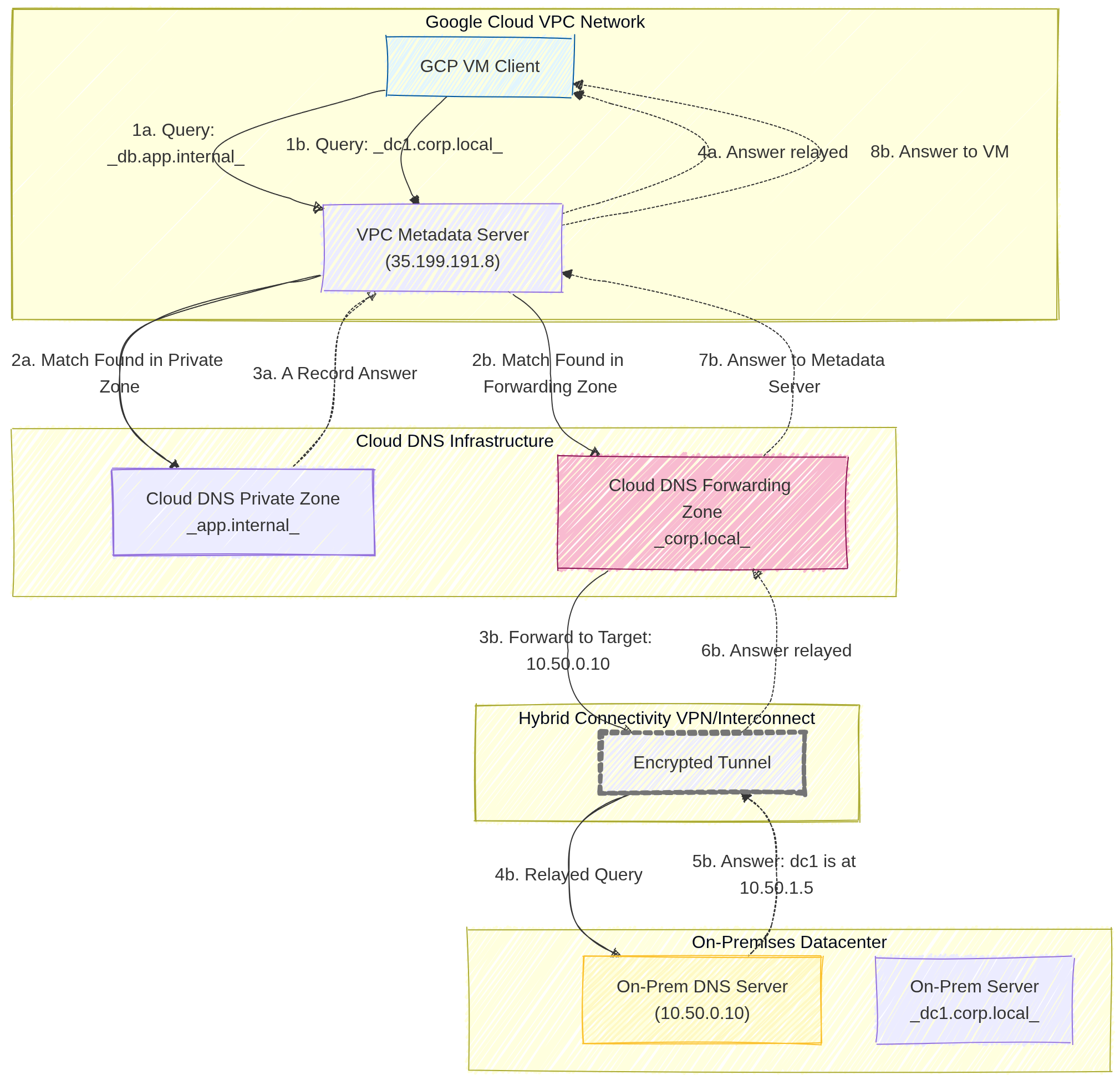

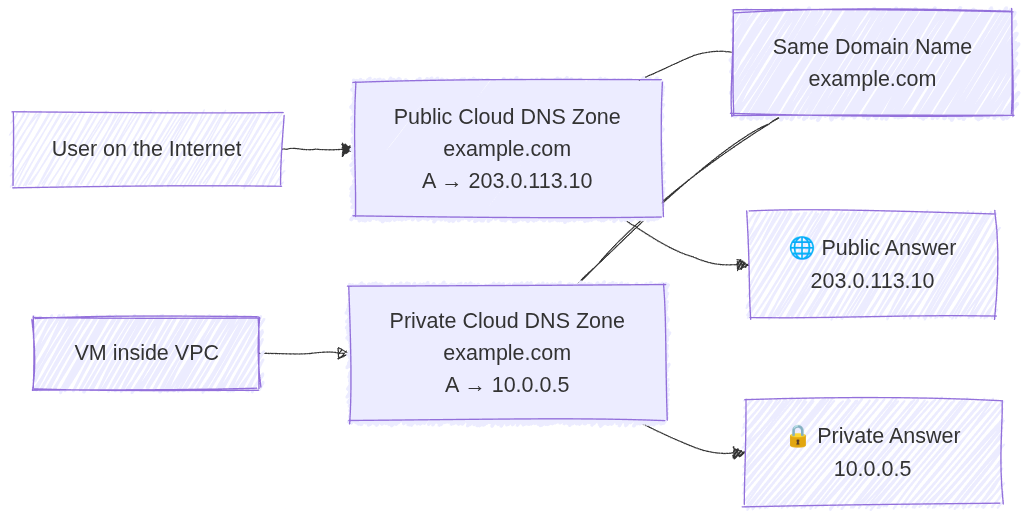

- Networking: VPC Networks, Load Balancers, Cloud DNS, Hybrid Connectivity (Cloud VPN, Cloud Interconnect).

- Operations & Security: IAM, Cloud Logging, Cloud Monitoring, VPC Service Controls, Cloud Armor, Secret Manager.

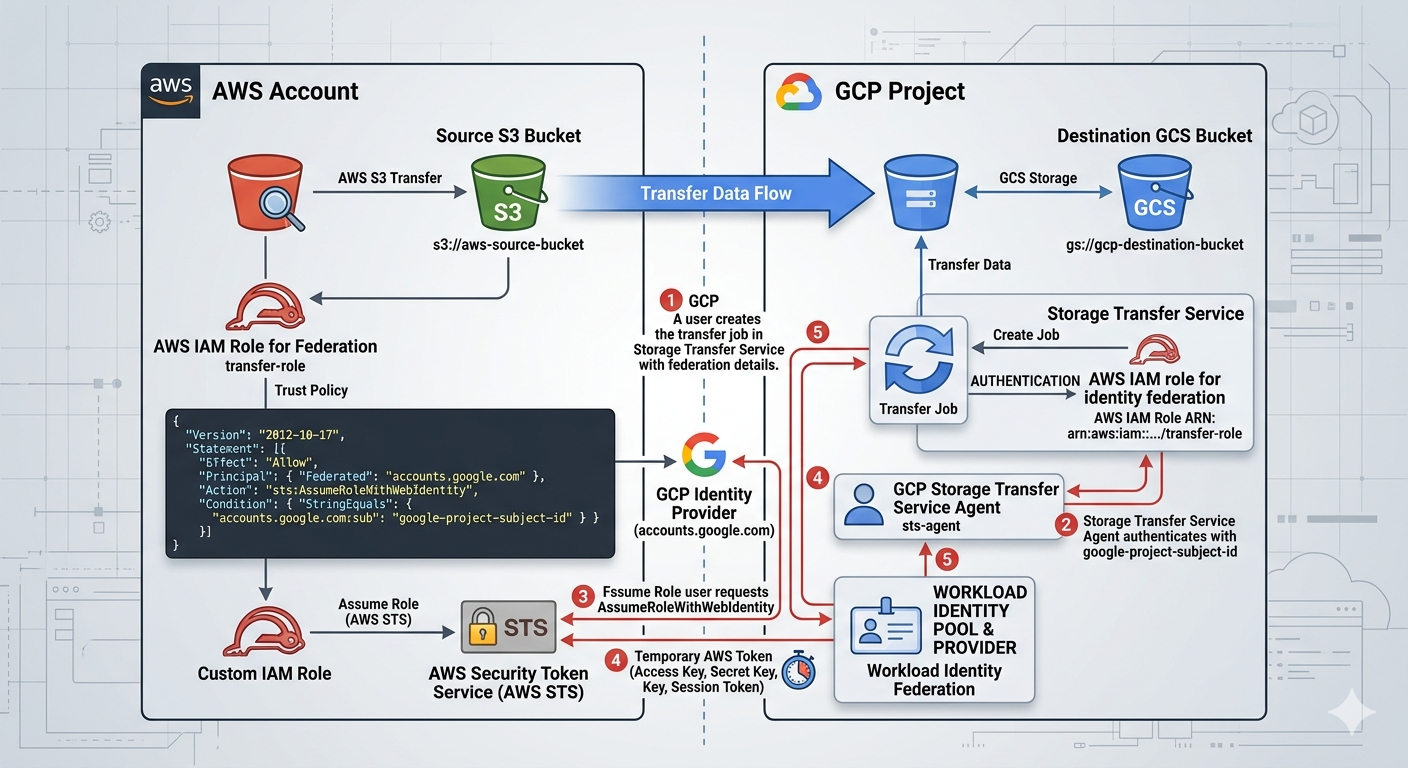

- Migration Tools: Migrate to Virtual Machines, Database Migration Service, Storage Transfer Service.

Building Locally

If you want to run this book locally to study offline or modify the notes, you will need the mdBook command-line tool.

- Install mdBook (requires the Rust toolchain):

cargo install mdbook mdbook-epub - Serve the book locally:

This compiles the Markdown files and opens a local web server at http://localhost:3000 with hot-reloading enabled.mdbook serve --open

Content Generation

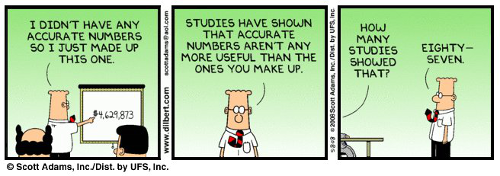

The core content and technical facts within these Markdown files were initially structured and generated with the assistance of AI, then curated, reviewed, and formatted specifically for this mdBook layout.

Mock Tests

License

This project is licensed under the GNU General Public License v3.0 (GPLv3).

You are free to use, modify, and distribute this study guide, provided that any modifications or derivative works are also distributed under the same open-source GPLv3 license. See the LICENSE file for more details.

Compute Services

Image source: Google Cloud Documentation

Compute Engine

Infrastructure as a Service (IaaS) providing fully customizable Virtual Machines (VMs). Best for legacy apps, custom OS requirements, or high-performance databases.

Google Kubernetes Engine (GKE)

Managed Kubernetes for orchestrating containerized applications. Choose Autopilot for a fully managed experience or Standard for full node-level control.

Cloud Run

Fully managed serverless platform for running request-aware containers. Features scale-to-zero, integrated traffic splitting, and support for sidecars.

Cloud Functions

Event-driven serverless platform for executing small snippets of code (glue logic). Ideal for processing GCS uploads, Pub/Sub messages, or Firestore triggers.

App Engine

Platform as a Service (PaaS) for building web apps and APIs. Available in Standard (sandboxed, fast scaling) and Flexible (Docker-based, custom runtimes) environments.

Compute Engine: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. Compute Engine Overview

Compute Engine is Google Cloud’s Infrastructure as a Service (IaaS) offering, providing customizable Virtual Machines (VMs).

- Machine Families (2026 Standards)

- General-purpose: Best price-performance. Includes E2, N2, and the new N4 (optimized for modern workloads with flexible sizing).

- Compute-optimized: High performance per core. Includes C2, C3, and C4 (the latest generation for high-performance computing).

- Memory-optimized: High memory/vCPU ratio. Includes M1, M2, and M3.

- Accelerator-optimized: GPUs attached (e.g., A2, A3).

- Custom Machine Types: Variable vCPU and RAM configurations when preset types don’t fit your needs.

2. Pricing and Discounts

- Cost of Stopped VMs: If you stop a VM, you stop paying for CPU and RAM, but you still pay for attached Persistent Disks and any reserved Static External IPs.

- External IPs:

- Ephemeral: Automatically assigned when VM starts, released when VM stops/deletes

- Static: Reserved IP address that persists independently of VM lifecycle (incurs charges when unused)

- Sustained Use Discounts (SUD): Automatic discounts for running instances for a significant portion of the month (N1, N2).

- Committed Use Discounts (CUD): 1 or 3-year commitment for a predictable workload.

- Spot VMs: Up to 91% discount. These can be terminated by Google at any time with a 30-second notice. Best for fault-tolerant, stateless batch jobs.

- Use shutdown scripts to handle graceful termination and save state.

- Preemption of a Spot VM is called a preemption, not a system crash.

- Reservations: Ensure resources are available when needed. Often used with CUDs to guarantee capacity.

3. Instance Templates and Managed Instance Groups (MIGs)

- Instance Templates: Immutable resources that define VM properties (machine type, image, labels). Used to create MIGs.

- Managed Instance Groups (MIGs): A collection of identical VMs that offer high availability and scalability.

- Auto-healing: Automatically recreates VMs that fail health checks.

- Auto-scaling: Dynamically adds or removes VMs based on CPU utilization, load balancing capacity, or custom metrics.

- Regional MIGs: Highly recommended for production as they distribute VMs across multiple zones in a region.

Live migration is the process of moving a running VM from one physical host to another without downtime. Google uses this for infrastructure maintenance, allowing your VMs to keep running during host updates. It requires no action from you.

4. Persistent Disks, Snapshots and Images

- Persistent Disks (PD): Durable network storage. You can resize a disk up but never down.

- Disk Types:

- Standard PD: HDD-based, cost-effective for sequential read/write workloads

- SSD PD: Higher IOPS and throughput for demanding workloads (databases, apps)

- Hyperdisk: Independent performance scaling (see Section 4.1)

- Disk Encryption:

- Google-managed: Default, encryption handled by Google

- Customer-Managed Keys (CMEK): You control keys in Cloud KMS

- Customer-Supplied Keys (CSEK): You provide and manage encryption keys

- Snapshots: Incremental backups of disks, stored globally. Best for disaster recovery.

- Custom Images: A Gold Master boot disk with your OS and software pre-installed. Best for consistent deployments in MIGs.

- Local SSD: Physical drives attached directly to the host. Data is ephemeral and lost if the VM is stopped or deleted.

You can attach up to 24 local SSDs to a single VM, depending on the machine type. Each local SSD is 375 GB, providing up to 9 TB of local SSD storage per VM. Local SSDs provide high-performance ephemeral storage.

4.1. Hyperdisk

High-performance block storage with independent scaling of performance and capacity.

- Hyperdisk Balanced: SSD-like performance at lower cost. Good balance of price and performance.

- Hyperdisk Extreme: Ultra-high throughput and IOPS for demanding workloads (databases, AI/ML training, HPC).

- Performance scales independently from capacity (unlike standard Persistent Disks).

- Can be attached to sole-tenant nodes and used with MIGs.

5. Sole-Tenant Nodes

Dedicated, single‑tenant physical servers in Google Cloud that run only your project’s Compute Engine VMs. They provide hardware‑level isolation by ensuring no other customer’s workloads share the same underlying host.

Primary Use Cases

Regulatory or compliance requirements that mandate physical isolation (e.g., healthcare, finance, government). Security boundaries where you must avoid multi‑tenant hardware for risk or policy reasons. Bring‑Your‑Own‑License (BYOL) scenarios for software that is licensed per physical core, socket, or host. Workload placement control, such as pinning specific VMs to specific hardware types.

Node Groups & Placement

Nodes are organized into node groups, which act as pools of dedicated hosts. VMs use node affinity/anti‑affinity rules to control placement, ensuring they land on the correct physical nodes. You can enforce strict placement (must run on a specific node type) or preferred placement (try this node type first). Useful for keeping related workloads together or separating sensitive workloads across different hosts.

6. Connecting to Instances

- SSH Access:

gcloud compute ssh [VM_NAME]- Uses a direct SSH connection to the VM’s public IP

- Requires the VM to have an external IP

- Firewall must allow TCP on port

22from your client - Your machine connects over the public internet

- Identity-Aware Proxy (IAP):

gcloud compute ssh VM_NAME --zone=ZONE --tunnel-through-iap- Uses IAP TCP Tunneling (Zero‑Trust access)

- Works even when the VM has no external IP

- Requires IAM role:

roles/iap.tunnelResourceAccessor - Firewall must allow TCP on port

22from IAP’s IP range35.235.240.0/20 - SSH traffic goes through Google’s secure IAP tunnel to the VM’s internal IP

7. Service Accounts and Metadata

- Service Accounts: VMs use these to authenticate to other Google Cloud services (GCS, BigQuery). Always use custom service accounts with Least Privilege for production.

The default Compute Engine service account

PROJECT_NUMBER-compute@developer.gserviceaccount.comis automatically created and has the Editor role on the project. It is automatically attached to new VMs unless you specify a different service account or disable it. - Service Account Scopes: Control what APIs the service account can access

- Project-wide: Applies to all VMs using the default service account

- Instance-level: Set per-VM for granular control

- Metadata: Used to pass configuration data. Startup scripts are automated scripts that run every time the VM boots.

- Metadata Server: Accessible at

http://metadata.google.internal/computeMetadata/v1/.

7.1. VM Security and Availability

- Shielded VMs: Hardened VMs with security features to protect against boot-level malware/rootkits

- Secure Boot: Blocks untrusted boot loaders and drivers

- vTPM: Virtual Trusted Platform Module for key storage and measurement

- Integrity Monitoring: Verifies VM boot chain hasn’t been compromised

- Confidential Computing: Encryption at runtime using AMD SEV-SNP. Protects data while it’s being processed.

- Availability Policies:

- On-host maintenance: Controls behavior during host maintenance (Migrate/Terminate)

- Automatic restart: Whether GCP restarts VM after unexpected failure

- Provisioning model: Standard vs Spot (affects pricing and preemptibility)

- GPUs Available: T4, A100, H100. Each has specific licensing requirements and zone availability.

8. Essential gcloud Commands

- Create a VM:

gcloud compute instances create [NAME] --zone=[ZONE] --machine-type=[TYPE] - Resize a MIG:

gcloud compute instance-groups managed resize [NAME] --size=[NEW_SIZE] - List Instances:

gcloud compute instances list

9. Exam Tips

- Preemption: If a Spot VM is terminated, it is a preemption, not a system crash.

- Zonal vs. Regional MIG: Choose Regional MIG for the highest availability.

- Metadata Header: Requests to the metadata server require the header

Metadata-Flavor: Google. - Machine Type Selection: If a question asks for the best cost-performance for a general workload, consider E2 or N4. For high-performance databases, consider C4 or M3.

10. External Links

GKE: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. GKE Fundamentals

Google Kubernetes Engine (GKE) is a managed environment for deploying, managing, and scaling containerized applications using Google infrastructure.

- Managed Kubernetes: Google manages the Kubernetes Control Plane, while you manage worker nodes in Standard mode.

- Cluster Types:

- Autopilot: The default and recommended mode for 2026. Fully managed; Google manages nodes, scaling, and security. You pay only for running pods.

- Standard: You manage the node infrastructure. Full control over nodes, SSH access, and custom machine types.

2. Cluster Configurations

- Regional Clusters: Control Plane and nodes replicated across multiple zones. Higher availability (99.95% SLA).

- Zonal Clusters: Control Plane and nodes in a single zone. Less expensive (99.5% SLA).

- Private Clusters: Nodes have internal IP addresses only. Communication with Control Plane via VPC peering. Requires Cloud NAT for outbound internet access.

3. Node Management and Scaling

- Node Pools: A group of nodes with the same configuration. Support for N4 (general purpose) and C4 (compute optimized) machine types in 2026 for optimized performance.

- Cluster Autoscaler: Automatically adds or removes nodes based on resource demands.

- Horizontal Pod Autoscaler (HPA): Scales pod replicas based on CPU or custom metrics.

- Vertical Pod Autoscaler (VPA): Adjusts CPU and memory reservations for pods.

Deployment → Manages app lifecycle: rolling updates, rollbacks, scaling. Creates and controls ReplicaSets. This is a recommented way to run stateless apps in GKE.

apiVersion: apps/v1 kind: Deployment metadata: name: my-app spec: replicas: 3 strategy: type: RollingUpdate rollingUpdate: maxSurge: 1 maxUnavailable: 1 selector: matchLabels: app: my-app template: metadata: labels: app: my-app spec: containers: - name: app image: nginx:1.25 ports: - containerPort: 80

ReplicaSet → Ensures a fixed number of Pods are running. Usually not used directly. Managed (created automatically) by Deployments.

apiVersion: apps/v1 kind: ReplicaSet metadata: name: my-app-rs spec: replicas: 3 selector: matchLabels: app: my-app template: metadata: labels: app: my-app spec: containers: - name: app image: nginx:1.25

GKE → Use Deployments for stateless workloads. ReplicaSets are created automatically.

4. GKE Networking

-

Services:

- ClusterIP (default)

- Internal-only virtual IP.

- Accessible only inside the cluster.

- Used for pod‑to‑pod communication.

ClusterIP Service Definition for Internal Traffic

apiVersion: v1 kind: Service metadata: name: my-clusterip-service spec: type: ClusterIP selector: app: my-app ports: - port: 80 # service port targetPort: 8080 # container port- NodePort

- Opens port

30080on every node. - Accessible via

http://<node-ip>:30080. - Still load‑balances across pods.

- Opens port

NodePort Service Exposing Port 80 → 30080

apiVersion: v1 kind: Service metadata: name: my-nodeport-service spec: type: NodePort selector: app: my-app ports: - port: 80 targetPort: 8080 nodePort: 30080 # must be in range 30000–32767- LoadBalancer:

- GKE automatically creates a Google Cloud external Load Balancer

- Assigns a public IP

- Traffic → LB → NodePort → Pod

- This is the standard way to expose a service publicly

LoadBalancer Service Exposing Port 80

apiVersion: v1 kind: Service metadata: name: my-loadbalancer-service spec: type: LoadBalancer selector: app: my-app ports: - port: 80 targetPort: 8080 - ClusterIP (default)

-

Ingress: Manages external access (layer-7 HTTP/HTTPS) routing mechanism and creates a Google Cloud External Application Load Balancer.

-

Container-Native Load Balancing: Uses Network Endpoint Groups (NEGs) to route traffic directly to pods.

5. Storage in GKE

In Kubernetes, Pods are ephemeral — they can be rescheduled, recreated, or moved to another node at any time. Stateful apps (databases, queues, caches, file‑based apps) need persistent storage that survives pod restarts.

That’s where Persistent Volumes (PV) and Persistent Volume Claims (PVC) come in.

- Persistent Volumes and Persistent Volume Claims: Managed storage for stateful applications.

- Storage Classes: Defines storage types (e.g., standard HDD, SSD, or Balanced PD).

- Hyperdisk: Support for Google Cloud Hyperdisk in 2026 for high-performance GKE workloads.

For more details see Persistant Disk

6. Connecting GKE Pod to Memorystore (standard)

To connect a GKE Pod to a Google Cloud Memorystore (Redis) instance, you need to ensure they share a network and then inject the connection details into your Kubernetes deployment.

Network Prerequisites

- Same VPC: The Redis instance and GKE cluster must be in the same VPC network and same region.

- VPC-Native GKE: Your GKE cluster must be VPC-native (IP Aliasing enabled). Standard route-based clusters cannot natively route traffic to the Google-managed VPC where Redis lives.

Find Connection Details

Once the Redis instance is created, retrieve its internal IP address and port from the Google Cloud Console or CLI:

- Host IP:

10.x.x.x - Port:

6379(Default) - Auth String: If Auth is enabled, you will also need the password string.

Store Credentials in Kubernetes

The best practice is to store these details in a Kubernetes Secret so they aren’t hardcoded in your application code.

kubectl create secret generic redis-creds \

--from-literal=REDIS_HOST=10.x.x.x \

--from-literal=REDIS_PORT=6379 \

--from-literal=REDIS_PASSWORD=your-auth-string

Update the GKE Deployment

Inject these values into your Pod as environment variables in your deployment.yaml.

spec:

containers:

- name: my-app

image: gcr.io/my-project/my-app:v1

env:

- name: REDIS_HOST

valueFrom:

secretKeyRef:

name: redis-creds

key: REDIS_HOST

- name: REDIS_PORT

valueFrom:

secretKeyRef:

name: redis-creds

key: REDIS_PORT

Verify Connectivity

You can test the connection by running a temporary “debug” pod with redis-cli installed:

kubectl run redis-test --rm -it --image=redis:7 -- \

redis-cli -h [YOUR_REDIS_IP] -p 6379 ping

# Expected Output: PONG

Note on Security: By default, Memorystore does not have a firewall. Use Kubernetes

NetworkPoliciesto restrict which Pods in your cluster are allowed to send egress traffic to the Redis IP address.

7. GKE Security

- Workload Identity: The recommended way for GKE workloads to access Google Cloud services.

Workload Identity lets GKE pods access Google Cloud APIs without service account keys. It maps a Kubernetes Service Account to a Google Cloud Service Account, giving pods secure, short‑lived credentials managed automatically by GKE and IAM.

- Binary Authorization: Ensures only trusted container images are deployed.

Binary Authorization ensures only trusted, verified container images can run in GKE. It enforces deploy‑time security by requiring signed attestations from approved build or security systems, blocking unapproved or unscanned images before they reach the cluster.

- RBAC: Manages permissions inside the cluster.

Role‑Based Access Control in Kubernetes controls who can do what in the cluster. It uses Roles/ClusterRoles to define permissions and RoleBindings/ClusterRoleBindings to assign them to users, groups, or service accounts. It provides fine‑grained, namespace‑scoped or cluster‑wide access control without exposing unnecessary privileges.

- IAM: Manages permissions outside the cluster (e.g., cluster creation).

- Shielded GKE Nodes: Provides node identity and integrity.

8. Essential gcloud and kubectl Commands

- Create a Cluster:

gcloud container clusters create [CLUSTER_NAME] --zone [ZONE] --num-nodes [NUMBER] - Get Credentials:

gcloud container clusters get-credentials [CLUSTER_NAME] --zone [ZONE] - Resize a Cluster:

gcloud container clusters resize [CLUSTER_NAME] --node-pool [POOL_NAME] --num-nodes [NEW_SIZE] - Deploy an Application:

kubectl apply -f [FILENAME.YAML] - Check Pod Status:

kubectl get pods

9. Exam Tips and Gotchas

- Control Plane Upgrade: Google automatically upgrades the Control Plane. Define Maintenance Windows and Exclusions.

- Preemptible/Spot VMs: Use for cost savings in fault-tolerant workloads.

- Autopilot vs Standard: Choose Autopilot for reduced operational overhead unless specific node customization is required.

10. External Links

Cloud Run: ACE Exam Study Guide (2026)

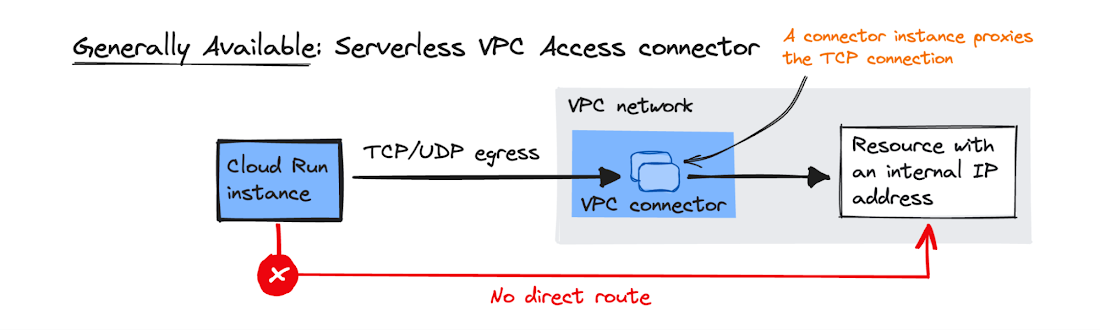

Image source: Google Cloud Documentation

1. Cloud Run Overview

Cloud Run is a fully managed, serverless compute platform that enables you to run containerized applications that are stateless, request-driven. It is built on Knative, an open-source standard for serverless.

- Key Characteristics

- Serverless: No infrastructure to manage. It scales automatically based on incoming requests.

- Scale to Zero: If there is no traffic, Cloud Run scales down to zero instances.

- Stateless: Containers must be stateless. Persistent data should be stored in Cloud Storage, Filestore, or a database.

- Concurrency: Cloud Run can handle multiple concurrent requests per container instance (default is 80, up to 1000).

Knative Framework

Knative is an open‑source framework that brings serverless capabilities to Kubernetes by providing standardized components for building, deploying, and running containerized applications.

It abstracts complex Kubernetes operations and adds features such as automatic scaling (including scale‑to‑zero), traffic management, revisioning, and event‑driven execution through CloudEvents.

Knative consists of two main parts: Serving, which handles request‑driven workloads, and Eventing, which manages event routing and triggers. Cloud Run is built directly on Knative Serving, offering a fully managed version of its core serverless functionality.

2. Deployment & Sidecars (2026)

- Methods:

- Deploy from Container Image: Provide a URL to an image in Artifact Registry.

- Deploy from Source: Cloud Run uses Cloud Build to automatically create an image and deploy it.

- Sidecar Containers: Support for multiple containers in a single pod.

- Use Case: Running a Cloud SQL Auth Proxy alongside your app to handle database connections securely.

- Use Case: Running a logging or monitoring agent (e.g., OpenTelemetry) without modifying the main app code.

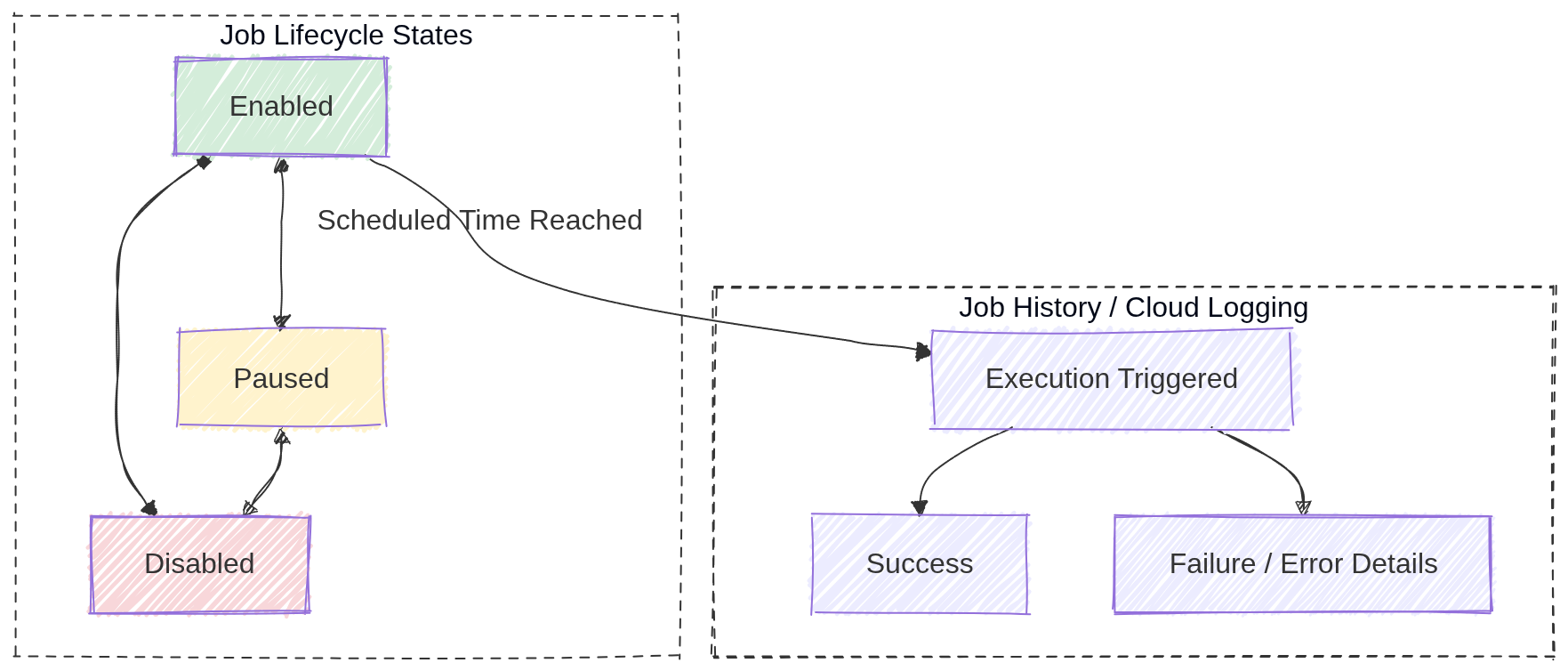

- Jobs vs. Services:

- Cloud Run Services: For code that handles requests (HTTP/gRPC).

- e.g. a Spring Boot application handling REST calls.

- Cloud Run Jobs: For code that performs work (data processing, backups) and exits when finished.

- e.g. a Spring Boot application with a

CommandLineRunner(see interface JavaDoc).

- e.g. a Spring Boot application with a

- Cloud Run Services: For code that handles requests (HTTP/gRPC).

3. Revisions and Traffic Management

- Revisions: Every time you deploy a change, Cloud Run creates a new immutable revision.

- Traffic Splitting: Simultaneously route percentages of traffic to different versions (e.g., 50/50 for A/B testing or 1% for Canary testing).

- Tagging: Assign a specific URL to a revision for testing before routing main traffic.

- Rollbacks: Instantly roll back to a previous revision by shifting 100% of traffic.

Deployment Strategies

Blue‑Green Deployment

Two identical environments exist: Blue (current) and Green (new).

- Deploy the new version to the Green environment.

- Test Green without affecting users.

- Switch 100% of traffic from Blue → Green in one action.

- Rollback is instant by switching traffic back to Blue.

Use cases: zero‑downtime releases, fast rollback, predictable behavior.

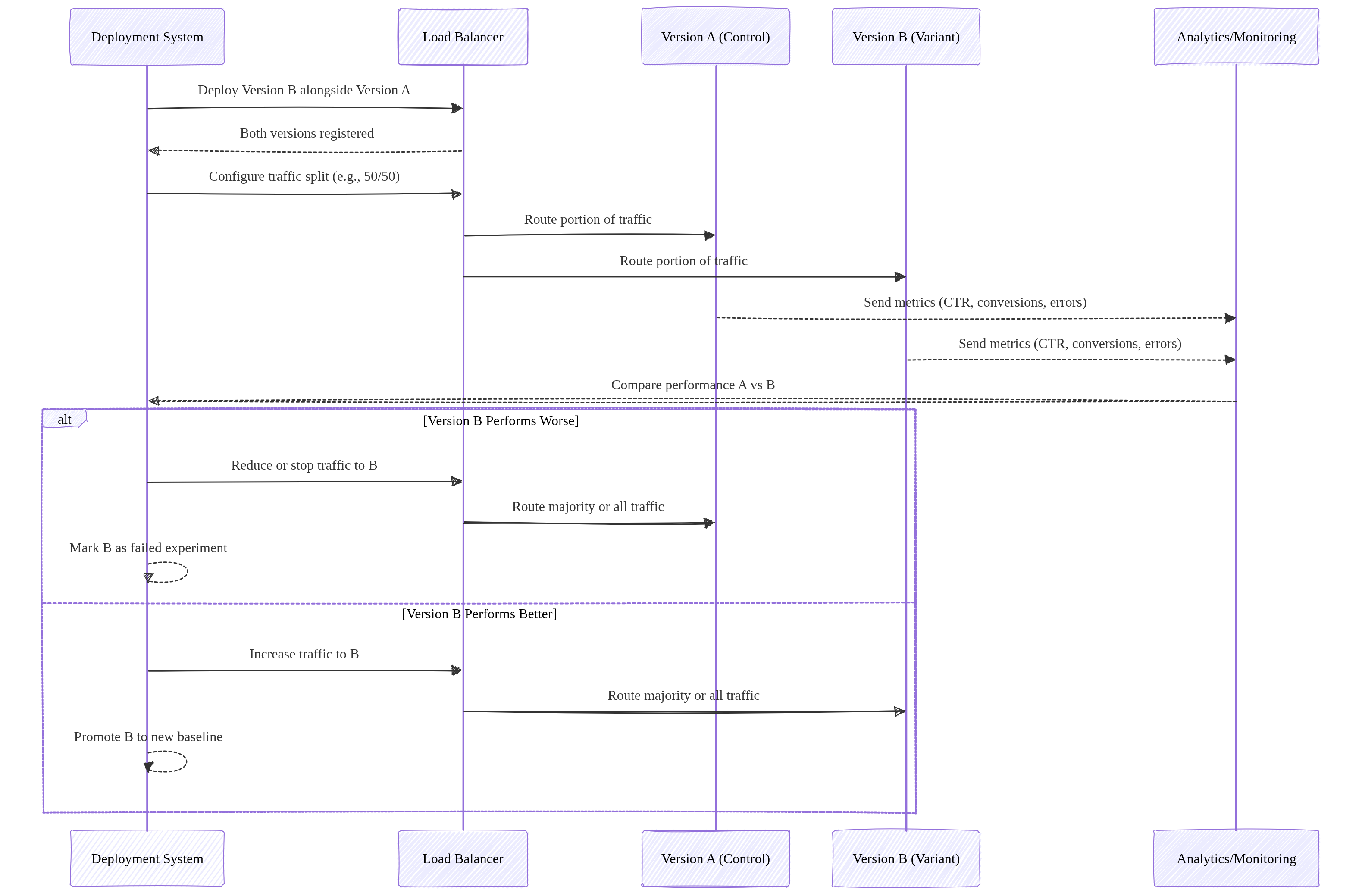

A/B Testing

Two versions run simultaneously, each receiving a portion of traffic.

- Version A = baseline.

- Version B = experimental variant.

- Users are split (e.g., 50/50 or 90/10).

- Compare metrics: conversions, latency, errors, user behavior.

Purpose: Experimentation and data‑driven decision‑making.

Traffic behavior: Parallel traffic to both versions for comparison.

Image source: Own work (Mermaid diagram).

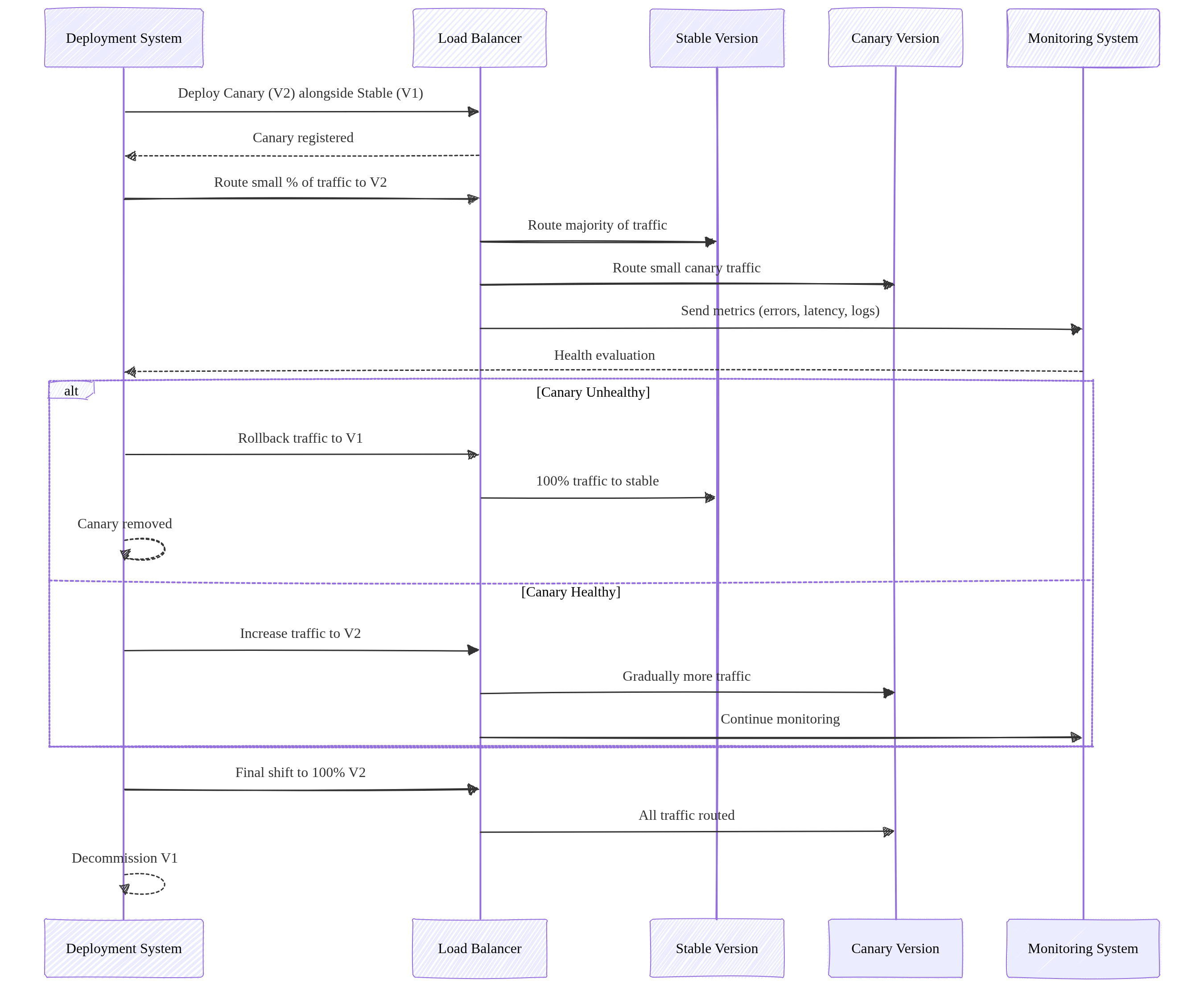

Canary Deployment

Gradually roll out a new version to a small subset of users.

- Start with a small percentage (e.g., 1%).

- Monitor errors, latency, logs.

- Increase traffic gradually (e.g., 1% → 10% → 50% → 100%).

- Rollback by shifting traffic back to the stable version.

Use cases: risk‑reduction, real‑world testing, incremental rollout.

Image source: Own work (Mermaid diagram).

Rolling Update Deployment

A rolling update replaces application instances gradually, updating a few replicas at a time until the entire fleet runs the new version.

- New version is deployed in small batches (e.g., 1 pod at a time).

- Each new instance must pass readiness checks before receiving traffic.

- Old instances are terminated only after new ones become healthy.

- Traffic is continuously served throughout the process — zero downtime.

- Rollback is performed by reversing the rollout (deploying the previous version again), but it is slower than Blue‑Green.

Purpose: Safe, incremental rollout without requiring two full environments.

Traffic behavior: Traffic is always routed to a mix of old and new instances during the transition.

Rolling updates require strict backward compatibility because old and new versions run simultaneously. Breaking API changes cause runtime failures. Use versioning, tolerant readers, and the expand‑migrate‑contract pattern to safely evolve APIs.

Summary

- Blue‑Green Two full environments. Switch traffic all at once. Best for fast rollback.

- A/B Testing: Runs two versions in parallel to compare user behavior and performance metrics for data‑driven decisions.

- Canary: Gradual traffic shifting. Best for testing new versions with minimal risk.

- Rolling Update: Gradual replacement of old instances with new ones. Zero downtime, no duplicate environments, slower rollback than Blue‑Green but simpler and resource‑efficient.

4. Scaling, Resources & Probes

- Maximum Instances: Limits how far the service can scale up (prevents runaway costs).

- Minimum Instances: Keeps instances “warm” to eliminate cold start latency.

- CPU Allocation & Throttling

- Throttled (Default): CPU is only allocated during request processing. Once the response is sent, CPU is heavily “throttled” (reduced), which can cause background threads or asynchronous tasks to hang or fail.

- Always Allocated: CPU is available even when no requests are being processed. This is required for background tasks, WebSocket-like connections, or monitoring agents that need to run continuously.

- Probes (Health Checks)

- Startup Probe: Checks if the app is ready to serve traffic (prevents 503 errors during scale-up).

- Liveness Probe: Restarts the container if it becomes unhealthy or hangs.

- In Spring Boot this is achieved with an Actuator.

- GPU Support: Cloud Run now supports GPU acceleration for AI/ML inference workloads.

5. Networking and Ingress

- Ingress Settings:

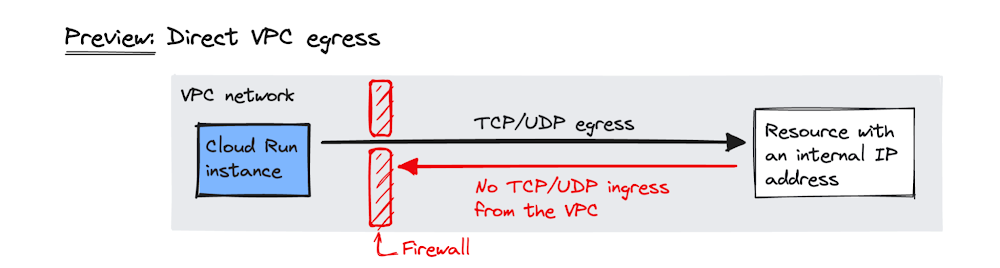

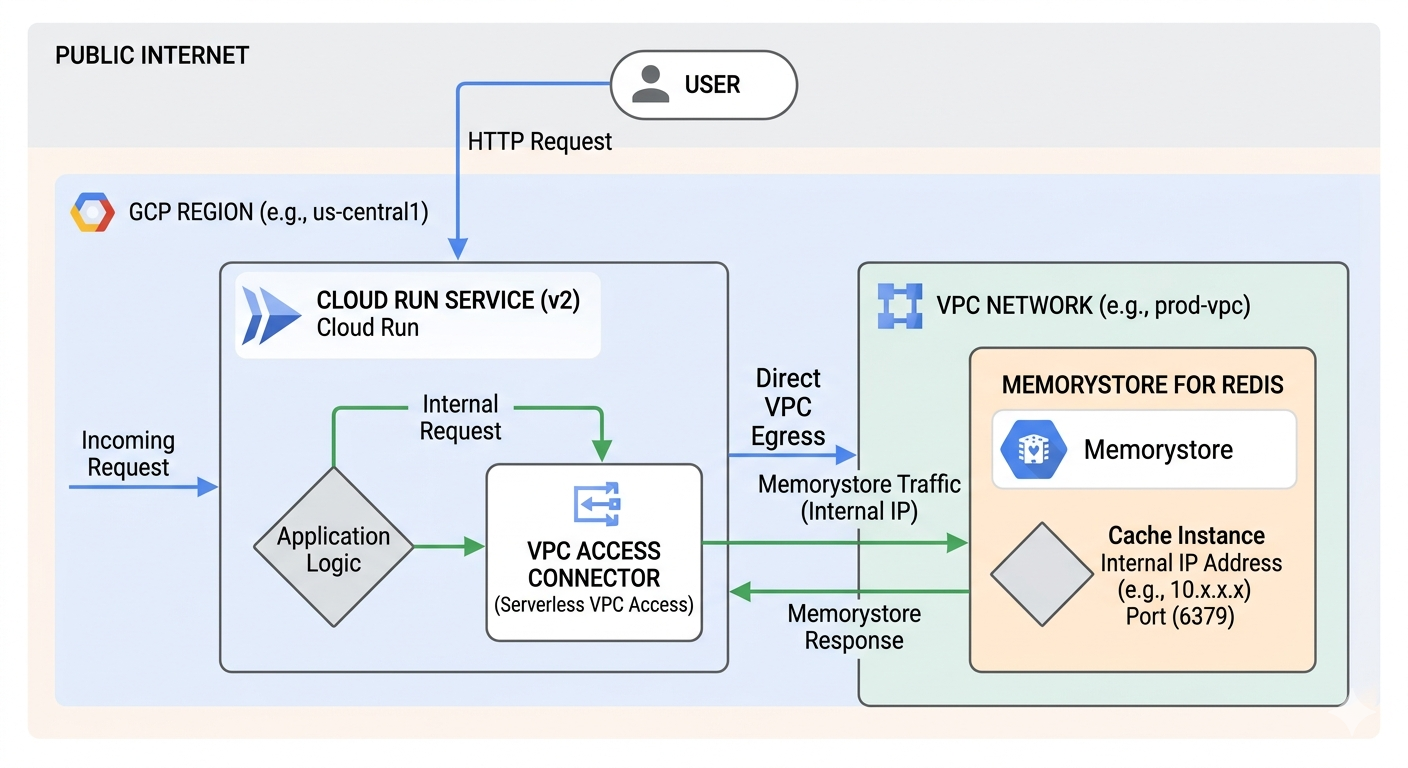

All(Public),Internal(VPC only), orInternal and Cloud Load Balancing. - Direct VPC Egress: A faster, more direct way to connect to a VPC without requiring a Serverless VPC Access Connector.

- Static Outbound IP: Route traffic through a VPC and use Cloud NAT to give your service a fixed external IP.

6. Security and Authentication

- IAM Roles:

roles/run.invoker: Required to call/trigger a service.roles/run.admin: Full control over services and revisions.

- Service Account: Always assign a Custom Service Account with minimal permissions for production.

- Private Authentication (Critical):

- To call a private Cloud Run service, the requester must provide a Google-signed ID Token (not an Access Token).

curl --header "Authorization: Bearer $(gcloud auth print-identity-token)" [SERVICE_URL]

- To call a private Cloud Run service, the requester must provide a Google-signed ID Token (not an Access Token).

- Secrets: Use Secret Manager to mount sensitive data as environment variables or volumes.

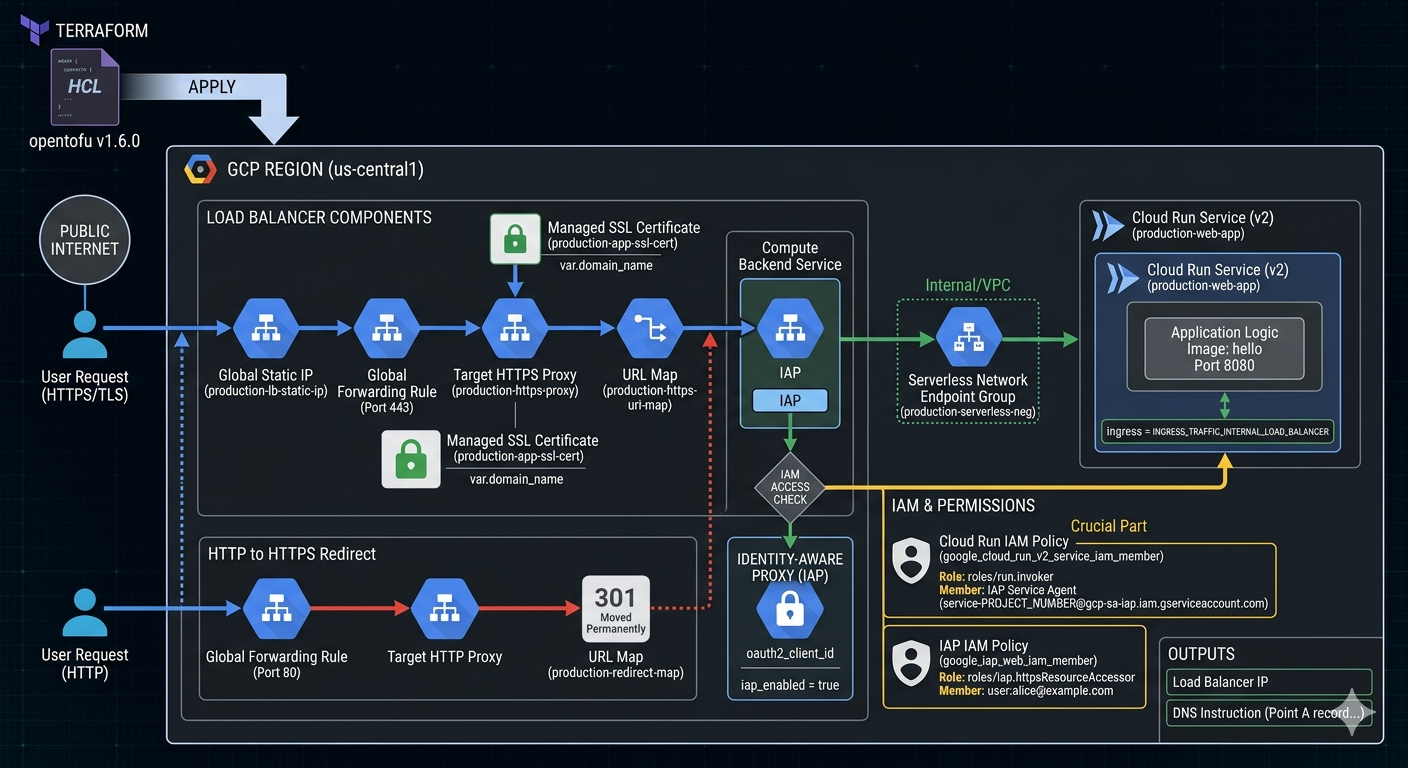

6.1. Identity-Aware Proxy (IAP)

- IAP (Identity-Aware Proxy) adds an authentication layer in front of Cloud Run services, requiring users or apps to authenticate via Google Identity before accessing the service.

- How it works:

- IAP sits between the user and the Cloud Run service.

- All traffic passes through IAP, which validates the user’s identity (Google Account, OAuth 2.0).

- Only authenticated and authorized users can reach the backend service.

- The (Load Balancer’s) Backend Service receives requests with an

x-goog-authenticated-user-emailheader containing the user’s email.

- Key Benefits:

- Enforces authentication at the edge — no code changes needed.

- Integrates with IAM for fine-grained access control (grant/deny per user or group).

- Works with Cloud Load Balancing (HTTP/HTTPS).

- Use Cases:

- Internal tools requiring Google-only access.

- Adding an extra auth layer beyond IAM.

- Protecting services that don’t have built-in authentication.

For a usecase see Cloud Run & IAP.

7. Storage Options

- In-memory: Ephemeral filesystem limited by allocated RAM.

- Cloud Storage FUSE: Mount a Cloud Storage bucket as a local volume (best for large media files).

- NFS (Filestore): Use VPC egress to mount a Filestore instance for high-performance shared POSIX storage.

8. Common ACE Exam Scenarios

- Scenario: Call a private service from a local script? → Use

gcloud auth print-identity-tokento get a bearer token. - Scenario: Prevent Cold Start for a critical API? → Set

min-instancesto at least 1. - Scenario: Connect to Cloud SQL securely without hardcoded IPs? → Use a Sidecar with the Cloud SQL Auth Proxy.

- Scenario: Your application starts a background thread to process an image after sending the HTTP response, but the process never completes or runs extremely slowly. → Change CPU Allocation to “always allocated” to prevent CPU throttling after the request is returned to the user.

- Scenario: Deploy a background task that runs for 2 hours? → Use Cloud Run Jobs (not Services).

By default, each task runs for a maximum of 10 minutes: you can change this to a shorter time or a longer time up to 168 hours (7 days). For tasks using GPUs, the maximum available timeout is 1 hour.

- Scenario: Split traffic 10/90 for a new feature? → Use Traffic Splitting across revisions.

- Scenario: Mount a 1TB shared drive for multiple instances? → Use Filestore via Direct VPC Egress.

9. Essential gcloud Commands

- Deploy from Image:

gcloud run deploy [SERVICE] --image [IMAGE_URL] - Update Traffic:

gcloud run services update-traffic [SERVICE] --to-revisions [REV1=10,REV2=90] - Set CPU Allocation (Throttling):

gcloud run services update [SERVICE] --no-cpu-throttling(always on) or--cpu-throttling(default) - List Revisions:

gcloud run revisions list --service [SERVICE] - Describe Service:

gcloud run services describe [SERVICE]

Final ACE Tip: Cloud Run is the preferred choice for modern, containerized microservices that need to scale to zero. Use Sidecars for infrastructure logic and ID Tokens for private service-to-service communication.

10. External Links

- Cloud Run - Google Cloud Documentation

- Youtube - Andrew Brown - Cloud Run

- Cloud Run - The Cloud Girl

- Where should I run my staff - The Cloud Girl

- Google Cloud Documentation - Canary Deployment

- Blue-Green, Canary and Other K8s Deployment Strategies - Traefik Labs

- Most Common Kubernates Deployments Strategies (Example & Code) - Anton Putra - Youtube

Cloud Functions: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. Cloud Functions Overview

Cloud Functions is a serverless, event-driven compute platform for executing snippets of code in response to events.

- Key Characteristics

- Serverless: No infrastructure management; automatic scaling.

- Single-purpose: Best for small, independent units of logic.

- Ephemeral: Instances are created, perform work, and are destroyed.

- Generations (2nd Gen is now the Default)

- 2nd Generation (Built on Cloud Run)

- Uses Eventarc as the unified eventing engine.

- Higher concurrency (up to 1000 requests per instance).

- Longer processing times (up to 60 minutes for HTTP).

- Larger instance sizes (up to 16GB RAM / 4 vCPUs) and support for C4 machine types.

- Traffic splitting between revisions.

- 1st Generation: Legacy model, limited concurrency (1 request per instance).

- 2nd Generation (Built on Cloud Run)

2. Triggers and Events (via Eventarc)

In 2nd Generation, Cloud Functions use Eventarc to deliver events from over 90+ Google Cloud sources.

- HTTP Triggers: Triggered via a direct URL (standard for webhooks or simple APIs).

- Event-Driven Triggers:

- Cloud Storage: Triggered by file creation, deletion, or metadata updates.

- Pub/Sub: Triggered when a message is published to a specific topic.

- Firestore: Triggered by document creation, updates, or deletions.

- Cloud Logging: Triggered by specific log entries (via Eventarc).

3. Runtimes and Deployment

- Supported Languages: Node.js, Python, Go, Java, Ruby, PHP, .NET Core.

- Deployment Source:

- Local machine via

gcloud. - Source repositories (GitHub, Bitbucket).

- Cloud Storage (ZIP file).

- Local machine via

- Cloud Build: When you deploy, Cloud Build automatically packages the function and stores it as a container image in Artifact Registry.

4. Scaling and Performance

- Max Instances: Limits scaling to prevent excessive costs.

- Min Instances: Keeps instances warm to eliminate cold start latency.

- Startup CPU Boost (2026): Temporarily allocates extra CPU during function startup to reduce cold start time — a cost-effective alternative to min-instances.

- Concurrency (2nd Gen Only): Allows a single instance to handle multiple simultaneous requests, reducing the total number of instances needed.

- Timeout: The maximum time a function can run before being terminated.

5. Networking

- Ingress Settings: Control whether the function is public or internal-only.

- VPC Access:

- Direct VPC Egress (Recommended for 2nd Gen): Faster, lower latency, and no connector overhead.

- Serverless VPC Access Connector: Required for 1st Gen or specific VPC requirements.

- Static Outbound IP: Requires a VPC Connector and Cloud NAT.

6. Security and IAM

- Permissions:

roles/cloudfunctions.invoker: Allows calling/triggering the function.roles/cloudfunctions.admin: Full control over functions.

- Service Accounts:

- Runtime Service Account: The identity the function uses when it runs (default is the App Engine default service account).

Best practice: Use a Custom Service Account with minimal permissions.

- Runtime Service Account: The identity the function uses when it runs (default is the App Engine default service account).

- Secrets: Integrate with Secret Manager to securely provide API keys or credentials.

7. Monitoring and Logging

- Cloud Logging: All

stdoutandstderroutput is automatically sent to Cloud Logging. - Error Reporting: Automatically captures unhandled exceptions.

- Cloud Monitoring: Tracks execution counts, execution times, and memory usage.

8. Essential gcloud Commands

- Deploy (HTTP):

gcloud functions deploy [NAME] --gen2 --runtime [RUNTIME] --trigger-http --allow-unauthenticated - Deploy (Pub/Sub):

gcloud functions deploy [NAME] --gen2 --runtime [RUNTIME] --trigger-topic [TOPIC_NAME] - List Functions:

gcloud functions list - Check Logs:

gcloud functions logs read [NAME] - Describe Function:

gcloud functions describe [NAME]

9. Java Cloud Functions – Required Interfaces

To write a Cloud Function in Java, you must implement one of Google’s functional interfaces. These are part of the Cloud Functions Framework, which allows you to run and test these functions locally or in any Knative-compatible environment.

dependencies {

implementation platform("com.google.cloud:libraries-bom:26.79.0")

implementation "com.google.cloud:google-cloud-functions"

}

Cloud Functions doesn’t run on Knative directly, but uses the Knative‑compatible Functions Framework, allowing the same function code to run on Cloud Run or any Knative environment.

For HTTP-triggered functions

com.google.cloud.functions.HttpFunction

public class HelloHttp implements HttpFunction {

@Override

public void service(HttpRequest request, HttpResponse response) throws Exception {

BufferedWriter writer = response.getWriter();

writer.write("Hello from HTTP Function!");

}

}

For background (event-driven) functions

com.google.cloud.functions.BackgroundFunction<T>

public class HelloBackground implements BackgroundFunction<PubSubMessage> {

@Override

public void accept(PubSubMessage message, Context context) {

var data = message.data();

System.out.println("Received Pub/Sub message: " + data);

}

}

// Simple POJO for Pub/Sub payload

record PubSubMessage(String data) {

}

For raw event payloads

com.google.cloud.functions.RawBackgroundFunction

public class HelloRawBackground implements RawBackgroundFunction {

@Override

public void accept(String json, Context context) {

System.out.println("Raw event payload: " + json);

}

}

These interfaces define the entry point that Google Cloud invokes when your function runs.

10. Exam Tips & Comparison

- Cloud Functions vs. Cloud Run

- Use Cloud Functions for event-driven snippets or simple glue logic.

Glue logic is small, simple code that connects components so they can work together. It adapts interfaces, transforms data, or coordinates calls between modules, acting as the plumbing that lets otherwise incompatible parts interoperate.

- Use Cloud Run for full web applications, containers with multiple routes, or complex dependencies.

- Use Cloud Functions for event-driven snippets or simple glue logic.

- Cold Starts: Occur when a new instance is spun up from zero. Mitigated by setting a

min-instancesvalue. - Idempotency: Event-driven functions should be idempotent to handle retries correctly.

Idempotency - An operation is idempotent if performing it multiple times produces the same result as performing it once.

11. External Links

- Cloud Run Functions - Google Cloud Documentation

- Youtube - Andrew Brown - Cloud Run

- Cloud Functions - The Cloud Girl

- Where should I run my staff - The Cloud Girl

App Engine: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. App Engine Overview

App Engine is a fully managed Platform as a Service (PaaS) for building and deploying web applications and APIs.

- Key Characteristics

- Serverless: No server management; automatic scaling.

- Application-Centric: Focus on code, not infrastructure.

- Regional Resource: An App Engine application is created within a specific region and cannot be moved once created.

- Max One App per Project: You can only have one App Engine application per Google Cloud project.

2. Standard vs. Flexible Environment

This is the most frequent exam topic for App Engine.

2.1. Standard Environment

- Speed: Starts in seconds. Scale-to-zero is supported.

- Infrastructure: Runs in sandboxed environments (specific versions of Node.js, Python, Java, Go, PHP, Ruby).

- Instance Classes: M1 (high memory), M2 (high CPU), F1-F4 (default). Determines CPU/memory ratio.

Class Memory CPU Cost F1 256MB 600MHz Cheapest F2 512MB 1.2GHz F4 1GB 2.4GHz M1 1GB 600MHz High memory M2 2GB 1.2GHz High memory - Constraints: Cannot modify OS; write-only to local filesystem (

/tmp). No SSH access. - Cost: Cheaper for intermittent traffic; scale-to-zero saves money.

- Best For: Web apps, APIs with varying traffic, rapid development.

2.2. Flexible Environment

- Speed: Starts in minutes (uses Compute Engine VMs). No scale-to-zero.

- Infrastructure: Runs in Docker containers. Any language/version supported.

- Machine Types: Uses custom machine types (not N4/C4 - those are Compute Engine).

- Capabilities: Modify OS, access filesystem, SSH access.

- Health Checks:

readiness_check: When to route traffic to instanceliveness_check: When to restart unhealthy instance

- Connectivity: Easier VPC access than Standard.

- Cost: More expensive; min 1 instance always running.

- Best For: Apps with consistent traffic, custom dependencies, high CPU/memory needs.

3. App Engine Hierarchy

Understanding the relationship between components is essential for resource management.

- Project: The root Google Cloud resource.

- Application: The App Engine app within the project (one per project).

- Service: Microservices within the app (e.g., “frontend”, “api”, “worker”).

- Version: Different versions of a service (e.g., “v1”, “v2”).

- Instance: The actual running units of a version.

4. Scaling Types

| Type | Standard | Flexible | Description |

|---|---|---|---|

| Automatic | Yes | Yes | Based on CPU, throughput, latency targets |

| Basic | Yes | No | On-demand; scale to zero when idle |

| Manual | Yes | Yes | Fixed instance count |

4.1. Automatic Scaling Parameters

automatic_scaling:

target_cpu_utilization: 0.6 # Scale when CPU > 60%

target_throughput_concurrent_requests: 100 # Or use this

min_instances: 0 # Standard: 0 allows scale-to-zero

max_instances: 10

4.2. Basic Scaling Parameters

basic_scaling:

max_instances: 5

idle_timeout: 60s # Shutdown after idle

5. Traffic Management

- Traffic Migration - Gradually shifts all traffic from one version to another. Useful for controlled rollouts, such as moving traffic from

v1tov2without an abrupt cutover. - Traffic Splitting - Routes live traffic to multiple versions at the same time. Common use cases include A/B testing (e.g., 50/50 split), Canary releases (e.g., 1% to a new version), and progressive rollouts with real user traffic.

- Methods - App Engine can distribute traffic using:

- IP-based splitting — consistent routing for users behind the same IP.

gcloud app services set-traffic my-service \ --splits v1=0.9,v2=0.1 \ --split-by ip - Cookie-based splitting — sticky sessions (per user) for experiments or A/B tests. App Engine uses the

GOOGAPPUIDcookie.gcloud app services set-traffic my-service \ --splits v1=0.5,v2=0.5 \ --split-by cookie - Random splitting — evenly distributed, non-sticky traffic.

gcloud app services set-traffic my-service \ --splits v1=0.99,v2=0.01 \ --split-by random

- IP-based splitting — consistent routing for users behind the same IP.

6. Deployment and Configuration

app.yaml: The core configuration file used for deployment. Defines runtime, scaling, handlers, and more.

6.1. Standard Environment Example

runtime: python312

instance_class: F2

automatic_scaling:

min_instances: 1

max_instances: 10

target_cpu_utilization: 0.7

env_variables:

ENV_NAME: "production"

handlers:

- url: /static

static_dir: static_files

- url: /.*

script: auto

warmup: enabled

6.2. Flexible Environment Example

runtime: java21

env: flex

automatic_scaling:

min_num_instances: 1

max_num_instances: 5

resources:

cpu: 1

memory_gb: 2

disk_size_gb: 10

readiness_check:

path: /ready

check_interval_sec: 5

- Deployment: Use

gcloud app deploy. By default, this promotes the new version to handle 100% of traffic. Use--no-promoteto deploy without switching traffic.

7. Networking and Security

- App Engine Firewall: Control access by IP range (Allow or Deny).

- IAP (Identity-Aware Proxy): Restrict access based on IAM identities without modifying application code.

- VPC Access: Use a Serverless VPC Access Connector to reach resources with private IPs (Cloud SQL, Memorystore).

- Flexible has easier VPC connectivity than Standard.

- Service Accounts:

- Default: App Engine Default Service Account (

project-id@appspot.gserviceaccount.com) with broad Editor permissions. - Best Practice: Create a custom service account with least-privilege permissions.

- Use

--service-account=YOUR-SA@PROJECT.iam.gserviceaccount.comin deployment.

- Default: App Engine Default Service Account (

- Security Best Practices:

- Never use the default service account in production

- Use IAP for user authentication

- Leverage firewall rules for IP-based access control

- Store secrets in Secret Manager, not in

app.yaml

8. Essential gcloud Commands

| Command | Description |

|---|---|

gcloud app create --region [REGION] | Initialize App Engine in a region |

gcloud app deploy [YAML_FILE] | Deploy application |

gcloud app deploy --no-promote | Deploy without shifting traffic |

gcloud app services set-traffic [SERVICE] --splits [V1=0.5,V2=0.5] | Split traffic |

gcloud app browse | Open app in browser |

gcloud app logs read | View application logs |

gcloud app versions list | List all versions |

gcloud app services list | List all services |

gcloud app instances list | List running instances |

9. When to Use App Engine vs Alternatives

| Use Case | Recommended Service |

|---|---|

| Traditional web apps, simple deployments | App Engine Standard |

| Containerized microservices, scale-to-zero | Cloud Run |

| Full Kubernetes control | GKE |

| Long-running processes, custom hardware | Compute Engine |

| App Engine Standard features + custom deps | App Engine Flexible |

9.1. App Engine vs Cloud Run Quick Reference

| Feature | App Engine | Cloud Run |

|---|---|---|

| Scale to zero | Standard only | Yes |

| Container support | Flexible only | Yes (primary) |

| Managed SSL | Yes | Yes |

| VPC access | Via connector | Native (Knative) |

| Warmup requests | Yes | No (cold starts) |

| Minimum cost | $0 (Standard) | ~$0 with scale-to-zero |

10. Cost Optimization Tips

- Use Standard environment for intermittent traffic (scale-to-zero)

- Set appropriate

min_instancesonly when cold start latency is critical - Choose correct instance classes (F1-F4 vs M1-M2) based on your memory/CPU needs

- Use

target_cpu_utilizationinstead of throughput for more efficient scaling - Deploy with

--no-promotewhen testing to avoid unnecessary traffic - Delete unused versions after migration

11. Exam Tips & Common Pitfalls

- Region Lock: Cannot change region after creation; must create new project.

- Always deploy new versions for major changes to enable instant rollbacks.

- Warmup requests reduce cold start latency (Standard environment).

- Flexible requires at least 1 instance - no scale-to-zero, factor this into cost.

- Handlers order matters - first matching handler wins.

- Static files must be served via handlers, not your application code.

- App Engine API: Use

google.appengine.applicationfor programmatic scaling config.

12. External Links

- App Engine - Google Cloud Documentation

- App Engine Standard - Google Cloud

- App Engine Flexible - Google Cloud

- Serverless VPC Access

- Youtube - Andrew Brown - App Engine

- App Engine - The Cloud Girl

Storage & Databases

Image source: Google Cloud Documentation

Cloud Storage

Scalable object storage for unstructured data (images, backups, logs). Features multiple storage classes (Standard, Nearline, Coldline, Archive) and automated lifecycle management.

Cloud SQL

Fully managed relational database (RDBMS) for MySQL, PostgreSQL, and SQL Server. Best for standard web applications and transactional (OLTP) workloads at a regional scale.

Cloud Spanner

Enterprise-grade, globally distributed relational database. Provides horizontal scalability for both reads and writes with strong global consistency and up to 99.999% availability.

Firestore / Datastore

Serverless, NoSQL document database built for mobile, web, and IoT apps. Supports real-time synchronization, offline data access, and ACID transactions at the document level.

Bigtable

High-performance, fully managed NoSQL wide-column database. Designed for petabyte-scale, low-latency workloads such as IoT telemetry, ad-tech, and financial data.

BigQuery

Serverless, cost-effective enterprise data warehouse (EDW) for OLAP analytics. Uses a columnar architecture to query petabytes of data using standard SQL.

Memorystore

Fully managed in-memory data store service for Redis, Valkey, and Memcached. Used for sub-millisecond latency caching, session management, and real-time analytics.

Filestore

Managed NFS file storage for applications that require a POSIX-compliant shared filesystem. Commonly used as shared storage for GKE pods and Compute Engine VMs.

Persistent Disk

Persistent Disk is durable, high‑performance block storage for VM instances. It’s replicated for reliability, supports snapshots, resizing, and can detach/reattach across VMs.

Cloud Storage: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. Cloud Storage Overview

Cloud Storage is Google Cloud’s object storage service for storing unstructured data such as files, images, backups, and static website assets.

- Characteristics:

- Global Namespace: Bucket names must be globally unique across all of Google Cloud.

- Durability: Designed for 99.999999999% (11 nines) annual durability.

- Consistency: Provides strong global consistency for all operations (read-after-write, list-after-write).

2. Bucket Locations

- Regional: Stored in a single region. Lowest latency for compute in the same region.

- Dual-Region: Stored in two specific regions for high availability (99.99%) and disaster recovery.

- Multi-Region: Stored across large geographic areas (e.g., US, EU) for global content distribution.

3. Storage Classes

| Storage Class | Use Case | Min Duration |

|---|---|---|

| Standard | Hot data, frequent access | None |

| Nearline | Access ~once per month | 30 days |

| Coldline | Access ~once per quarter | 90 days |

| Archive | Rare access, long-term storage | 365 days |

Autoclass (2026 Standard): Automatically moves objects to colder storage classes based on access patterns to optimize costs without manual intervention.

4. Access Control

- IAM (Recommended): Controls access at the bucket or project level.

- Uniform Bucket-Level Access (UBLA): Recommended for most use cases. It disables ACLs entirely and relies solely on IAM for better security management.

- ACLs (Legacy): Provides object-level permissions but is harder to manage at scale.

- Signed URLs: Provide temporary access to a specific object without requiring a Google account for the recipient. Perfect for sharing private content via a link.

5. Object Versioning and Lifecycle Management

- Object Versioning: Keeps old versions of objects to protect against accidental deletions or overwrites.

- Lifecycle Rules: Automate actions such as moving objects to a cheaper storage class or deleting them.

- Common Conditions:

Age(days),CreatedBefore(date),IsLive(true/false),MatchesStorageClass.

- Common Conditions:

- Soft Delete (2026 Standard): A bucket-level setting that allows you to recover deleted objects for a configurable retention period (default 7 days) even after they are deleted.

6. Retention Policies and Holds

- Retention Policy: Ensures objects are not deleted or overwritten for a specific duration.

- Bucket Lock: Once a retention policy is locked, it cannot be removed or shortened.

- Object Holds: Prevents deletion of specific objects for legal or event-based reasons.

7. Encryption

- Google-Managed Keys: The default encryption for all data at rest.

- Customer-Managed Encryption Keys (CMEK): Keys stored in Cloud KMS. The KMS key must be in the same region as the bucket.

- Customer-Supplied Encryption Keys (CSEK): You provide the raw key with each request.

Data is always encrypted at rest in Cloud Storage.

8. Data Migration Tools

gcloud storage: The modern, multi-threaded CLI for interacting with Cloud Storage (replacesgsutil).gsutil: Legacy tool, still functional but slower thangcloud storage.- Storage Transfer Service: Move data from AWS S3, Azure, or other GCS buckets.

- Transfer Appliance: A physical device for massive data migration (petabytes).

9. Performance and Triggers

- Resumable Uploads: Allows you to resume an upload after a communication failure. Recommended for files > 10MB or unstable networks.

- Parallel Composite Uploads: The

gcloud storageCLI automatically splits large files into chunks, uploads them in parallel, and “composes” them into one final object. This significantly increases speed for large files. - Combined Approach: The modern

gcloud storage cpcommand combines both—it uploads chunks in parallel, and each individual chunk upload is resumable, ensuring both high speed and reliability for massive files. - Requester Pays: The person accessing the data pays the egress costs instead of the bucket owner.

- Cloud Storage Triggers: GCS can trigger Cloud Functions or Cloud Run jobs immediately after an object is created, deleted, or archived (via Pub/Sub notifications).

10. Common ACE Exam Scenarios

- Scenario: Upload a 100GB file over an unstable connection? → Use Resumable Uploads.

- Scenario: Speed up the upload of a 1TB file? → Use Parallel Composite Uploads (via

gcloud storage). - Scenario: Process a file as soon as it’s uploaded? → Use Cloud Storage Triggers (GCS → Pub/Sub → Cloud Functions).

- Scenario: Automatically reduce costs for old data? → Use Lifecycle Rules or Autoclass.

- Scenario: Avoid ACL complexity? → Enable Uniform Bucket-Level Access.

- Scenario: High Availability for a single region? → Use Dual-Region.

- Scenario: Protect against “fat-finger” accidental deletion? → Enable Soft Delete or Object Versioning.

- Scenario: Give a non-GCP user temporary access to a file? → Use a Signed URL.

11. External Links

- Cloud Storage - The Cloud Girl

- Which Storage Should I Use - The Cloud Girl

- What are different storage types - The Cloud Girl

Cloud SQL: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. Core Overview

Cloud SQL is a fully managed relational database service (RDBMS) on Google Cloud.

- Supported Database Engines: MySQL, PostgreSQL, and SQL Server.

- Editions (2026 Standards):

- Cloud SQL Enterprise: Standard performance and reliability.

- Cloud SQL Enterprise Plus: Enhanced performance, higher availability (99.99% for regional), and near-zero downtime maintenance.

- Use Cases: Web frameworks, structured data, existing applications that require standard SQL, OLTP workloads.

Cloud SQL Index Advisor

Cloud SQL Index Advisor automatically analyzes query patterns and recommends new indexes to improve performance. It identifies slow or inefficient queries, suggests optimal indexes, and can show the expected impact before applying changes. It helps reduce manual tuning and keeps databases performing efficiently.

2. High Availability (HA) and Replication

Understanding the difference between HA and Read Replicas is heavily tested on the ACE exam.

High Availability (HA)

- Purpose: Protection against zone failures. Provides reliability, not performance scaling.

- Architecture: Regional configuration. Provisions a Primary instance in one zone and a Standby instance in another zone within the same region.

- Failover: Automatic. If the primary zone goes down, the standby takes over.

Read Replicas

- Purpose: Read performance scaling (offloading read queries from the primary instance).

- Architecture: Can be in the same region or a different region (Cross-Region Read Replica).

- Failover: Manual. You must manually promote a read replica to become a standalone primary instance if needed for disaster recovery.

3. Backups and Recovery

- Automated Backups: Taken daily within a configurable backup window. Retained for up to 365 days.

- On-Demand Backups: Taken manually at any time.

- Point-in-Time Recovery (PITR): Allows you to restore an instance to a specific fraction of a second.

- Cloning: You can clone a Cloud SQL instance to create an exact, independent copy.

4. Scaling

- Vertical Scaling: Increasing the machine type (vCPUs and RAM). Requires a restart of the database instance.

- Horizontal Scaling: Using Read Replicas to scale read capacity. Cloud SQL does not natively horizontally scale for write operations (use Cloud Spanner or AlloyDB for massive write scale).

- Storage Auto-Increase: Cloud SQL can automatically add storage capacity as you approach your limit.

- Important Fact: Cloud SQL storage can scale up (requires downtime), but it cannot scale down.

5. Security and Networking

- Private IP: Instances can have a private, internal IP via Private Services Access (VPC Peering).

- Cloud SQL Auth Proxy: The Gold Standard for secure connections. It uses IAM for authentication and automatically handles SSL/TLS. No need to whitelist IP addresses when using the proxy.

- IAM Authentication: Allows users and service accounts to log in using their Google Cloud identity instead of static database passwords.

6. Maintenance

- Maintenance Windows: You define a specific day and time when Google can perform updates.

- Impact: Maintenance usually results in a brief period of downtime (minimized in Enterprise Plus edition).

7. Decision Tree for the ACE Exam

- Structured data / Relational? -> Cloud SQL or Spanner.

- Local/Regional scale? -> Cloud SQL.

- High performance PostgreSQL requirements? -> AlloyDB.

- Global scale or massive writes? -> Cloud Spanner.

- Petabytes of data / Data Warehousing / OLAP? -> BigQuery.

- Unstructured data / NoSQL? -> Cloud Firestore or Cloud Bigtable.

8. Migration and Administrative Tasks

- Database Migration Service (DMS): The primary tool for migrations from on-premises or other clouds to Cloud SQL.

- Import/Export: You MUST store the SQL/CSV file in a Cloud Storage (GCS) bucket first before importing it into Cloud SQL.

- Service Account Permissions: The Cloud SQL Service Account must have

roles/storage.objectVieweron the GCS bucket for imports.

9. Using Cloud SQL in a Spring Boot App (Example)

Connect to Cloud SQL (PostgreSQL) using its IP, just like a regular PostgreSQL instance.

spring:

datasource:

url: jdbc:postgresql://10.0.0.10/DB_NAME?currentSchema=SCHEMA_NAME

username: USER

password: PASSWORD

driver-class-name: org.postgresql.Driver

jpa:

hibernate:

ddl-auto: update

show-sql: true

When to Use Each Cloud SQL Connection Method

-

Private IP

Use it when your service runs inside a VPC (GKE, GCE, Cloud Run with VPC connector). Best security and lowest latency. No public exposure.

-

Cloud SQL Auth Proxy

Use for local development or when you want automatic IAM auth and secure TLS without managing certificates. Works anywhere but adds a sidecar/agent.

./cloud-sql-proxy INSTANCE_CONNECTION_NAME \ --port=5432 \ --credentials-file=key.jsonFor more details see Connect using the Cloud SQL Auth Proxy (Google Cloud Documentation).

-

Socket Factory (JDBC Connector)

Use in Java apps (Spring Boot) when you want secure IAM‑based connections without running the proxy. Common in Cloud Run and GKE.

spring: datasource: url: jdbc:postgresql://google/DB_NAME?socketFactory=com.google.cloud.sql.postgres.SocketFactory&cloudSqlInstance=INSTANCE_CONNECTION_NAME username: USER password: PASSWORD driver-class-name: org.postgresql.Driver

10. Exam Tip

- Private IP → Best for production inside a VPC (GKE, GCE, Cloud Run + VPC connector)

- Auth Proxy → Easiest secure option for local dev or simple setups

- Socket Factory → Ideal for Java apps needing secure IAM auth without running the proxy

11. External Links

- Cloud SQL - The Cloud Girl

- Introduction to Databases - The Cloud Girl

- Which Database should I Use - The Cloud Girl

Cloud Spanner: ACE Exam Study Guide (2026)

Image source: Vecta.io

1. Core Overview

- Database Type: Fully managed, enterprise-grade relational database (RDBMS) with global scale.

- Key Features: Horizontal scalability, strong global consistency, and high availability (up to 99.999% SLA).

- Language: Supports Standard SQL (Google Standard SQL) and PostgreSQL-dialect.

2. When to Choose Cloud Spanner (Exam Scenarios)

- Massive Scale: Your relational database exceeds Cloud SQL storage limits (typically > 64 TB) or requires tens of thousands of reads/writes per second.

- Horizontal Scaling: You need a relational database that can scale horizontally (by adding more nodes/PUs) for both reads and writes.

- Global Geography: You need a globally distributed database with strong consistency across regions (e.g., global financial ledger, worldwide inventory system).

- Graph and Relational: With Spanner Graph, you can now store and query graph data alongside relational data in the same database using the ISO GQL standard.

3. Cloud Spanner vs. Cloud SQL vs. AlloyDB

The ACE exam frequently tests your ability to choose between these two services.

Cloud SQL vs AlloyDB vs Cloud Spanner (ACE Summary)

| Feature | Cloud SQL | AlloyDB | Cloud Spanner |

|---|---|---|---|

| Scope | Regional | Regional | Global / Multi‑regional |

| Scaling | Vertical (downtime) | Horizontal read scaling (read pools) | Horizontal read + write scaling |

| Performance | Standard | Much faster than Cloud SQL | Highest, globally consistent |

| Compatibility | MySQL / PostgreSQL / SQL Server | PostgreSQL‑compatible | Spanner SQL |

| Availability | HA optional (regional) | HA with primary + read pools | Built‑in global HA |

| Storage | Limited | High-performance, auto‑scaling | Virtually unlimited |

| Best For | Typical web apps, standard DB workloads | High‑performance transactional apps | Massive, global, mission‑critical systems |

4. Architecture and Compute

- Processing Units (PUs) and Nodes: Compute capacity is measured in PUs or nodes. 1 node = 1000 PUs.

- Scaling and Storage Limits (2026 Standards):

- Zero Downtime: Scaling nodes/PUs up or down is instantaneous and happens while the database is serving traffic.

- Storage Limit: Each 1,000 PUs (1 node) now supports up to 10 TB of storage in modern configurations. If your database grows beyond this, you MUST add more nodes even if CPU usage is low.

- Interleaved Tables: A unique Spanner feature where a child table’s rows are physically stored with the parent table’s rows. This drastically improves performance for related data joins by ensuring data is co-located on the same split.

- High Availability (SLA):

- Regional: 99.99% availability.

- Multi-regional: 99.999% availability (the famous five nines).

5. IAM and Security

- Access Control: IAM roles can be granted at the project, instance, or database level.

- Common Roles:

roles/spanner.admin: Full control of all Spanner resources.roles/spanner.databaseAdmin: Manage databases and schema, but cannot create/delete the Spanner instance itself.roles/spanner.databaseReader: Read data and schema.roles/spanner.viewer: View instance and database metadata (read-only).

- Security Features: Integrates with Cloud Audit Logs and supports CMEK (Customer-Managed Encryption Keys).

6. Backups and Recovery

- Point-in-Time Recovery (PITR): Allows you to read data from a specific microsecond in the past. The maximum retention period for PITR is 7 days.

- Backups: You can take on-demand backups of your database. These backups are retained for up to 1 year and are stored in the same geographic location as the database instance.

- Export/Import: Uses Dataflow to move data between Spanner and Cloud Storage (Avro or CSV formats).

7. Interacting with Cloud Spanner (CLI)

For the ACE exam, know the gcloud spanner command group:

gcloud spanner instances list: List all instances in a project.gcloud spanner databases create [DB_NAME] --instance=[INSTANCE_NAME]: Create a new database.gcloud spanner instances update [INSTANCE_NAME] --nodes=[COUNT]: Scale an instance horizontally.

8. External Links

- Youtube - Andrew Brown - GCP ACE

- Cloud Spanner - The Cloud Girl

- Introduction to Databases - The Cloud Girl

- Which Database should I Use - The Cloud Girl

Firestore: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. What Firestore Is

Firestore is:

- A NoSQL document database

- Stores data as collections to documents to fields

- Serverless (auto-scaling, no servers to manage)

- Multi-regional by default

- Supports real-time listeners

- Strongly consistent

Firestore is the next generation of Cloud Datastore.

2. Firestore Modes

| Feature | Native Mode | Datastore Mode |

|---|---|---|

| Best For | Mobile & Web apps | Server-side workloads |

| Real-time | Yes (Listeners) | No |

| Offline | Yes (Caching) | No |

| Queries | Collection Group Queries | No Collection Group Queries |

| Consistency | Strong Consistency | Strong Consistency (2026 standard) |

| Use Case | Real-time dashboards, chat | High-throughput backend services |

ACE Tip: Choose Native Mode unless you specifically need backwards compatibility with legacy Cloud Datastore applications.

3. Data Model

Firestore stores data as:

- Collections

- Documents

- Fields

- Subcollections

Key points:

- Documents can contain subcollections

- Collections do not contain other collections directly

- Documents are limited to 1 MB

4. Consistency and Transactions

Firestore provides:

- Strong consistency for reads, writes, and queries

- ACID transactions (document-level)

- Automatic retries for transactions

Two write types:

- Transactions: read and write, atomic

- Batch writes: write-only, atomic

ACID — Atomicity, Consistency, Isolation, Durability — four properties that ensure database transactions are processed reliably and maintain data integrity even in the presence of failures.

- Atomicity - All operations in a Firestore transaction succeed or none do. If any write fails, Firestore rolls back the entire transaction.

- Consistency - Firestore ensures that any committed transaction leaves the data in a valid state according to your rules (security rules, schema expectations, constraints you enforce in code).

- Isolation - Transactions in Firestore run with snapshot isolation. Each transaction sees a consistent snapshot of the data and is retried automatically if conflicts occur.

- Durability - Once Firestore commits a write, it is stored redundantly across multiple Google data centers, ensuring it survives crashes or outages.

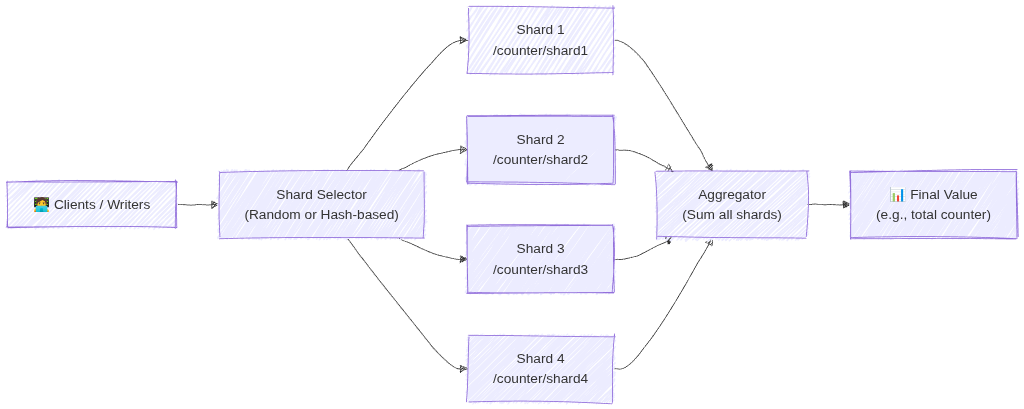

5. Write Limits (Major Exam Trap)

Firestore enforces:

- 1 write per second per document

- High-frequency writes require:

- Sharded counters

A counter is split into multiple shard documents. Each write updates a random shard and reads combine all shard values. This avoids hitting the write limit of a single document and prevents hotspots during heavy traffic.

- Randomized document IDs

Firestore auto generated IDs distribute documents evenly across storage. Randomized keys avoid sequential hotspots and improve write throughput for high volume collections.

- Sharded counters

This appears frequently in scenario questions.

6. Indexing

Firestore automatically creates:

- Single-field indexes

You can create:

- Composite indexes for complex queries

If a query needs an index:

- Firestore returns an error with a link to create it

7. Security

Firestore uses two layers of security:

7.1. IAM

- Controls administrative access

- Example: creating indexes, backups, exports

7.2. Security Rules

- Control data-level access

- Based on:

- User identity

- Document data

- Request time

- Custom conditions

ACE exam often tests the difference.

8. Networking and Access

Firestore is accessed via:

- HTTPS API

- Client SDKs (web, iOS, Android)

- Server SDKs

Firestore is not mounted like a filesystem.

9. Offline Support

Firestore supports offline caching for:

- Web

- iOS/Android

Datastore mode does not support offline mode.

10. Real-Time Updates

Firestore supports:

- Real-time listeners

- Automatic push updates to clients

Datastore mode does not support this.

11. Scaling and Performance

Firestore scales automatically using:

- Horizontal partitioning (sharding)

To avoid hotspots:

- Use randomized document IDs

- Avoid sequential keys

11.1 How Firestore Sharding Works

Firestore sharding spreads write operations across multiple shard documents instead of sending all writes to a single document. Each client writes to a randomly selected (or hash‑based) shard, which prevents write hotspots and avoids the 1‑write‑per‑second limit on individual documents. When reading, the application aggregates all shard documents (e.g., summing counters) to produce the final result. This allows Firestore to scale write throughput horizontally.

Image source: Own work (Mermaid diagram).

For more details see What is Database Sharding? - Anton Putra - Youtube.

12. Queries and Aggregations (2026 Update)

Firestore supports:

- Range, Compound, and Collection group queries.

- Server-side Aggregations:

COUNT(),SUM(), andAVG().Aggregations are highly efficient;

COUNT()costs 1 index read per 1,000 documents. - Vector Search: Supports similarity searches (KNN) for GenAI/LLM embeddings.

13. Backups and Exports

Firestore supports:

- Scheduled backups

- On-demand backups

- Stored in Cloud Storage

- Can restore to a new database

14. Data Retention and Recovery (Critical for ACE)

14.1. TTL (Time To Live)

- Automatically deletes documents based on a timestamp field.

- Used for cost optimization and cleaning up stale data (e.g., sessions, logs).

- Deletion typically happens within 24 hours of expiration.

14.2. PITR (Point-in-Time Recovery)

- Allows data recovery to any version from the last 7 days.

- Protects against accidental deletion or corruption.

- Must be explicitly enabled at the database level.

14.3. Named Databases

- You can create multiple Firestore databases in one project (e.g., (

default),test-db,prod-db). - Databases can be in different locations and even different modes (Native vs. Datastore).

15. Using in a Spring Boot App (Example)

Add the dependency: com.google.cloud:spring-cloud-gcp-starter-data-firestore.

@Service

public class FirestoreService {

private final Firestore db;

public void addDocument(String coll, String id, Map<String, Object> data) {

db.collection(coll).document(id).set(data);

}

public DocumentSnapshot getDocument(String coll, String id) throws Exception {

ApiFuture<DocumentSnapshot> query = db.collection(coll).document(id).get();

return query.get();

}

}

16. Common ACE Exam Scenarios

- Scenario: Automate deletion of 30-day-old logs? → Use TTL on a timestamp field.

- Scenario: Recover data from a mistake made 4 hours ago? → Use PITR (7-day window).

- Scenario: Isolate Dev/Prod data in one project? → Use Named Databases.

- Scenario: Count 1 million documents cheaply? → Use the native

COUNT()aggregation query. - Scenario: Build a GenAI chatbot with Firestore? → Use Vector Search for embeddings.

- Scenario: Migrate legacy Datastore app? → Firestore in Datastore mode.

- Scenario: Native vs Datastore mode? → Choose Native for mobile/web (real-time/offline).

- Scenario: Change database location after creation? → Not possible (must recreate).

17. Quick Summary Table

| Topic | Key Points |

|---|---|

| Data model | Collections to Documents to Fields (Max 1 MB) |

| Write limit | 1 write/sec per document |

| Consistency | Strong Consistency |

| Security | IAM (Admin) + Security Rules (Data Access) |

| Recovery | PITR (7 days) + Scheduled Backups (GCS) |

| Cleanup | TTL (Time-to-Live) via timestamps |

| Modes | Native (Real-time/Offline) vs Datastore (High-volume server) |

18. Final ACE Tips

- Firestore is the default NoSQL choice for most GCP apps.

- TTL = Cost savings (auto-delete).

- PITR = Disaster recovery (7-day window).

- Named Databases allow multiple DBs per project.

- Native mode is for mobile/web; Datastore mode is for high-volume server apps.

- Location is permanent once the database is created.

- Aggregations (

COUNT,SUM,AVG) are now built-in and server-side.

19. External Links

- Firestore - The Cloud Girl

- Introduction to Databases - The Cloud Girl

- Which Database should I Use - The Cloud Girl

Cloud Bigtable: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. Core Overview

- Database Type: Fully managed, wide-column NoSQL database.

- Scale: Designed for massive datasets (Terabytes to Petabytes).

- Performance: Offers single-digit millisecond latency and extremely high throughput for both read and write operations.

- Compatibility: Natively exposes an Apache HBase API.

2. When to Choose Cloud Bigtable (Exam Scenarios)

- Time-Series Data: IoT sensor readings, server telemetry, and monitoring metrics.

- High Throughput / Low Latency: Ad-tech, financial market data, and massive multiplayer game state or analytics.

- Rule of Thumb: If an exam question explicitly mentions “sub-millisecond latency,” “petabytes of data,” or “HBase compatibility,” Bigtable is highly likely the correct answer.

3. When NOT to Choose Cloud Bigtable

- Relational Data: It does not support standard SQL queries, complex joins, or multi-row transactions.

- Small Datasets: It is not cost-effective or necessary for datasets under 1 Terabyte. Cloud Firestore, Cloud SQL, or Cloud Spanner are better suited for smaller workloads.

4. Architecture and Performance

- Compute and Storage Separation: Nodes handle compute, while data resides on Colossus. This allows you to scale nodes up or down with zero downtime without migrating data.

- Storage Types:

- SSD: Default choice. For high-performance, low-latency workloads.

- HDD: For massive amounts of data (>10 TB) where latency is not critical (e.g., batch processing).

- Immutability: You cannot change the storage type (SSD/HDD) after the instance is created.

- Row Key Design (Tested):

- Avoid Hotspotting: Do NOT use sequential IDs or timestamps as the start of a row key.

- Best Practice: Use “tall and skinny” tables. Use hashed values, reverse domain names (e.g.,

com.google.cloud), or salted keys to ensure data is distributed evenly across nodes.

5. Command Line Operations

- The

cbtTool: While you usegcloudto manage the Bigtable instances and clusters, the ACE exam expects you to know that you interact with the actual tables and data using thecbtcommand-line tool. - Common Commands:

cbt createtable,cbt read,cbt set.

6. High Availability and Replication

- Replication: Bigtable provides high availability by replicating data across multiple clusters in different zones or regions.

- App Profiles: Used to manage how your applications connect to a cluster.

- Single-Cluster Routing: Directs traffic to one cluster (consistent, but no automatic failover).

- Multi-Cluster Routing: Automatically fails over to the nearest available cluster (High Availability).

7. Administrative Tasks and Scaling

- Scaling: You can increase or decrease the number of nodes in a cluster via the Console or

gcloudwhile the cluster is serving traffic (zero downtime). - Monitoring: Use Key Visualizer (a tool within the GCP Console) to identify hotspots and troubleshoot performance issues visually.

- Backups: Bigtable allows you to take Backups of tables. These are stored within the Bigtable service (in the same region), NOT in Cloud Storage. They can only be used to Restore to a new table.

8. External Links

- Cloud Bigtable - The Cloud Girl

- Introduction to Databases - The Cloud Girl

- Which Database should I Use - The Cloud Girl

BigQuery: ACE Exam Study Guide (2026)

Image source: Google Cloud Documentation

1. Core Overview

- Database Type: Fully managed, serverless enterprise data warehouse (EDW).